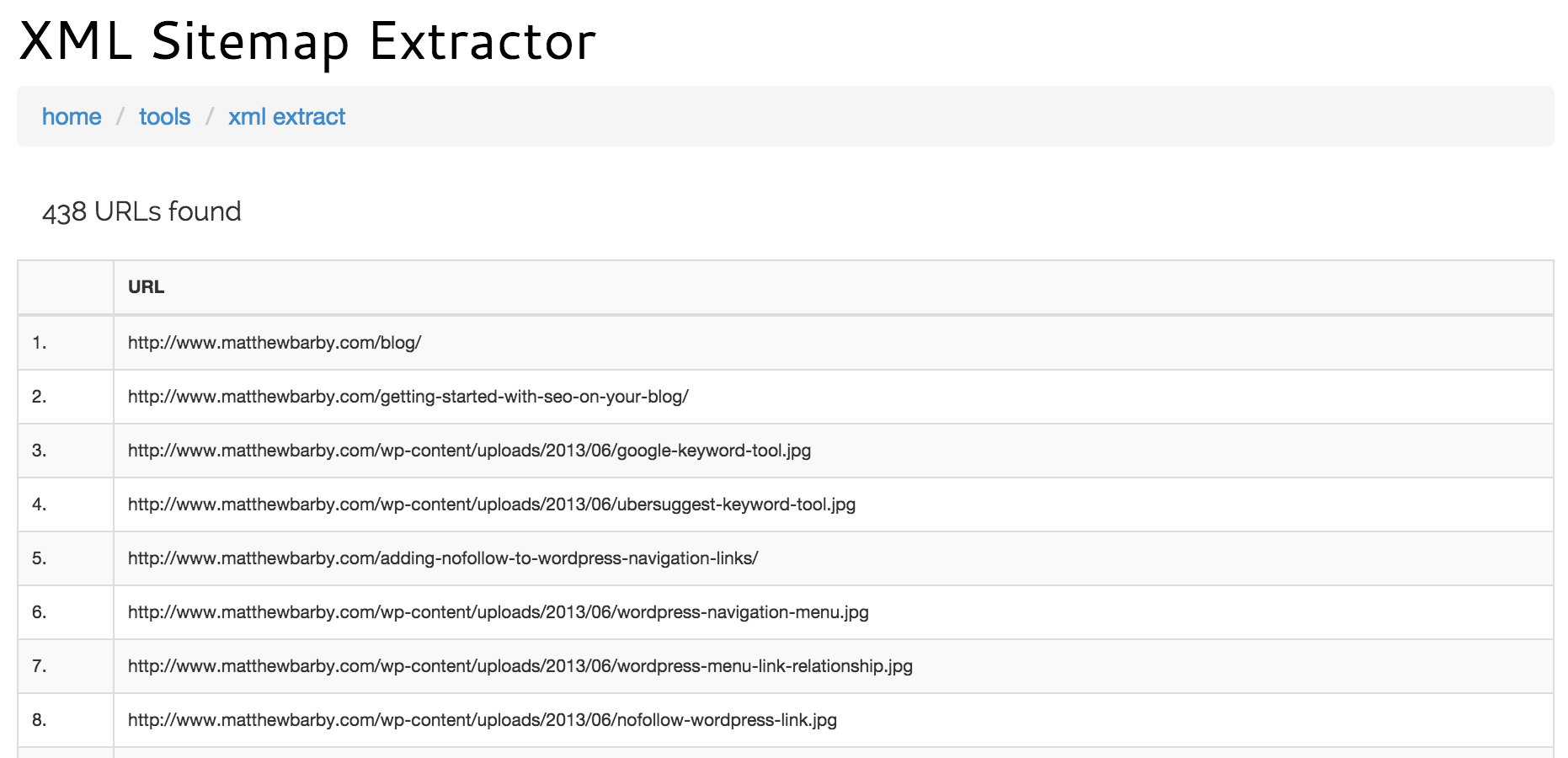

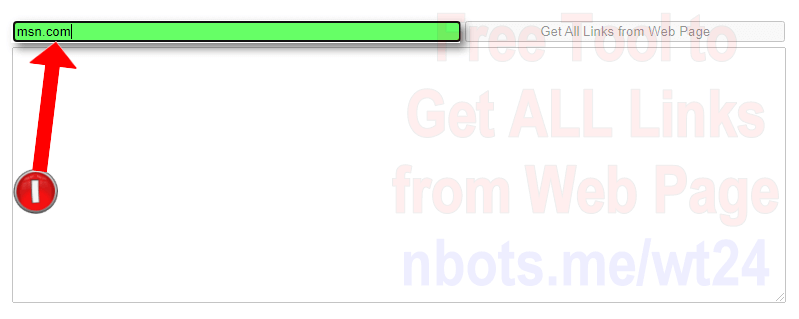

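Extracting information from web pages is an essential function in most web-related flows, and one of the most common jobs is pulling every link out of a page.

The simplest option is an online link extractor: type in the URL of the web page you want to extract links from and press Extract. As for the tool's algorithm, it gets the source of the web page and then extracts the URLs from that text. The tool is very easy to work with, even for beginners.

If you prefer code, you can run this in the browser console on a page that loads jQuery:

```javascript
// Print the href of every <a> element on the current page
$("a").each((i, e) => console.log($(e).attr("href")))
```

NodeJS has cheerio:

```javascript
const axios = require("axios");
const cheerio = require("cheerio");

(async () => {
  // Fetch the page (URL goes in the string) and parse it with cheerio
  const $ = cheerio.load((await axios.get("")).data);
  $("a").each((i, e) => console.log($(e).attr("href")));
})();
```

Ruby has the nokogiri gem, run from a script that starts with the `#! /usr/bin/env ruby` shebang.

Perl has HTML::Parser:

```perl
use LWP::Simple;  # exports get()
use HTML::Parser;
my $url = shift or die "No argument URL provided";
my $parser = HTML::Parser->new(api_version => 3, start_h => [sub {
    my ($tag, $attr) = @_;  # called for every start tag
    my $href = $tag eq "a" ? $attr->{href} : undef;
    print "$href\n" if $href;
}, "tagname, attr"]);
$parser->parse(get($url) or die "Failed to GET $url");
```

Sample usage (including writing to a file, per the OP's request; usage is the same for any script here with a shebang): `$`

Last year I blogged about how to use the Text.BetweenDelimiters() function to extract all the links from the href attributes in the source of a web page. The code was reasonably simple, but there's now an even easier way to solve the same problem using the new Html.Table() function.

In Power Automate Desktop, the Get details of web page action allows you to retrieve various details from web pages and handle them in your desktop flows.

To scrape pictures rather than links, open the website you are aiming to scrape pictures from and use an image downloader. If you're using the Chrome browser, an image downloader for Chrome will be a good choice; Edge users can try Microsoft Edge Image Downloader.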
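As a rough illustration of the "get the source of the page, then extract URLs from the text" approach (the same idea as pulling href values out with Text.BetweenDelimiters()), here is a dependency-free Node.js sketch. The function name `extractLinks` is hypothetical, and the regular expression is an assumption standing in for a real HTML parser such as cheerio or HTML::Parser, so unusual markup can fool it (for example, it also picks up `data-href` and `<link href>` values). Relative links are resolved with the built-in WHATWG `URL` class.

```javascript
// Sketch only: regex scanning of page source, not a full HTML parser.

/**
 * Return every href value found in an HTML string, resolved against
 * baseUrl and de-duplicated in first-seen order.
 */
function extractLinks(html, baseUrl) {
  // Matches href="...", href='...' or an unquoted href=value (case-insensitive).
  const hrefPattern = /\bhref\s*=\s*(?:"([^"]*)"|'([^']*)'|([^\s>]+))/gi;
  const seen = new Set();
  for (const match of html.matchAll(hrefPattern)) {
    // Exactly one of the three capture groups is defined for each match.
    const raw = match[1] ?? match[2] ?? match[3];
    try {
      // Resolve relative links such as "/about" or "page.html" against the page URL.
      seen.add(new URL(raw, baseUrl).href);
    } catch {
      // Skip values that are not valid URLs even after resolution.
    }
  }
  return [...seen];
}
```

For example, `extractLinks('<a href="/about">About</a> <a href="/about">Again</a>', "https://example.com/")` returns `["https://example.com/about"]`, because the duplicate link is dropped by the `Set`. Feeding it the text of a fetched page gives roughly what the online extractor produces, minus that tool's handling of edge cases.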
